
Obstet Gynecol Sci, Volume 66(4); 2023


Abstract

Objective

Due to its comprehensive, reliable, and valid format, the objective structured clinical examination (OSCE) is the gold standard for assessing the clinical competency of medical students. In the present study, we evaluated the importance of the OSCE as a learning tool for postgraduate (PG) residents assessing their junior undergraduate students. We further aimed to analyze quality improvement during the pre-coronavirus disease (COVID) and COVID periods.

Methods

This quality-improvement interventional study was conducted at the Department of Obstetrics and Gynecology. The PG residents were trained to conduct the OSCE. A formal feedback form was distributed to 22 participants, and their responses were analyzed using a five-point Likert scale. Fishbone analysis was performed, and the ‘plan-do-study-act’ (PDSA) cycle was implemented to improve the OSCE.

Results

Most of the residents (95%) believed that this examination system was extremely fair and covered a wide range of clinical skills and knowledge. Further, 4.5% believed it was more labor- and resource-intensive and time-consuming. Eighteen (81.8%) residents stated that they had learned all three domains: communication skills, time management skills, and a stepwise approach to clinical scenarios. The PDSA cycle was run eight times, resulting in a dramatic improvement (from 30% to 70%) in the knowledge and clinical skills of PGs and the standard of OSCE.

The objective structured clinical examination (OSCE), originally described by Harden in 1975 [1], is the gold standard for assessing medical students’ clinical competence in a comprehensive, planned, structured, and objective manner. It is a universal and widely accepted assessment tool. In this test, a team of examiners designated at multiple stations assesses the students’ competence in dealing with various hypothetical problems. As this test is structured and designed to assess skills, it is a more complex, resource- and time-intensive assessment exercise than traditional methods of assessment [2]. Traditionally, the OSCE is conducted by experienced assessors [3]; however, peers can also be used as assessors [4]. In this scenario, peers who have undergone a similar assessment in the recent past can add multiple dimensions to the exercise. Additionally, the OSCE can be used as a learning tool for young assessors who are more enthusiastic and adaptive to novel tools. Several studies have emphasized the importance of the OSCE as a learning enhancement as well as an excellent assessment method among undergraduate medical students [5-7]; however, there is a relative dearth of studies highlighting the role of postgraduate residents in enabling faculty to coordinate and organize the OSCE. Their role in arranging stations and training standardized and simulated patients is often overlooked. If this role can be defined, their activity may not only enhance the value of stations but also serve as an exercise in self-assessment, thereby adding to their existing skills on that topic.

This study was conducted to evaluate the role of the OSCE as a learning aid for near-peer assessors (postgraduate [PG] residents) while assessing junior undergraduate (UG) medical students in the Department of Obstetrics and Gynecology under the supervision of experienced assessors. At the same time, we aimed to improve the standards of the OSCE and the learning skills of PGs by involving them in the implementation of the OSCE.

The participants were postgraduate residents posted in the Department of Obstetrics and Gynecology at a tertiary care institute in Western Rajasthan.

This study was conducted in three phases. Only final-year OSCE exams were included; formative OSCEs conducted monthly for 5th/6th-semester students were excluded. The first phase was set as the baseline assessment and measurement of problems. After ethical approval, the first summative OSCE was conducted, which provided baseline data for fishbone analysis and planning. The aim was to improve the baseline knowledge about OSCE from 30% to 70% in 3 consecutive years, as the pre-exit and exit exams were conducted once a year for both regular and detained students. Implementation was done in the next OSCE, which was conducted after 1 month, and the changes were studied for 3 years. Continuous implementation and assessment were performed using five ‘plan-do-study-act’ (PDSA) cycles. Further improvements were made during the 6th PDSA cycle, mindful of the new challenges induced by the coronavirus disease 2019 (COVID-19) pandemic, which continued for four cycles.

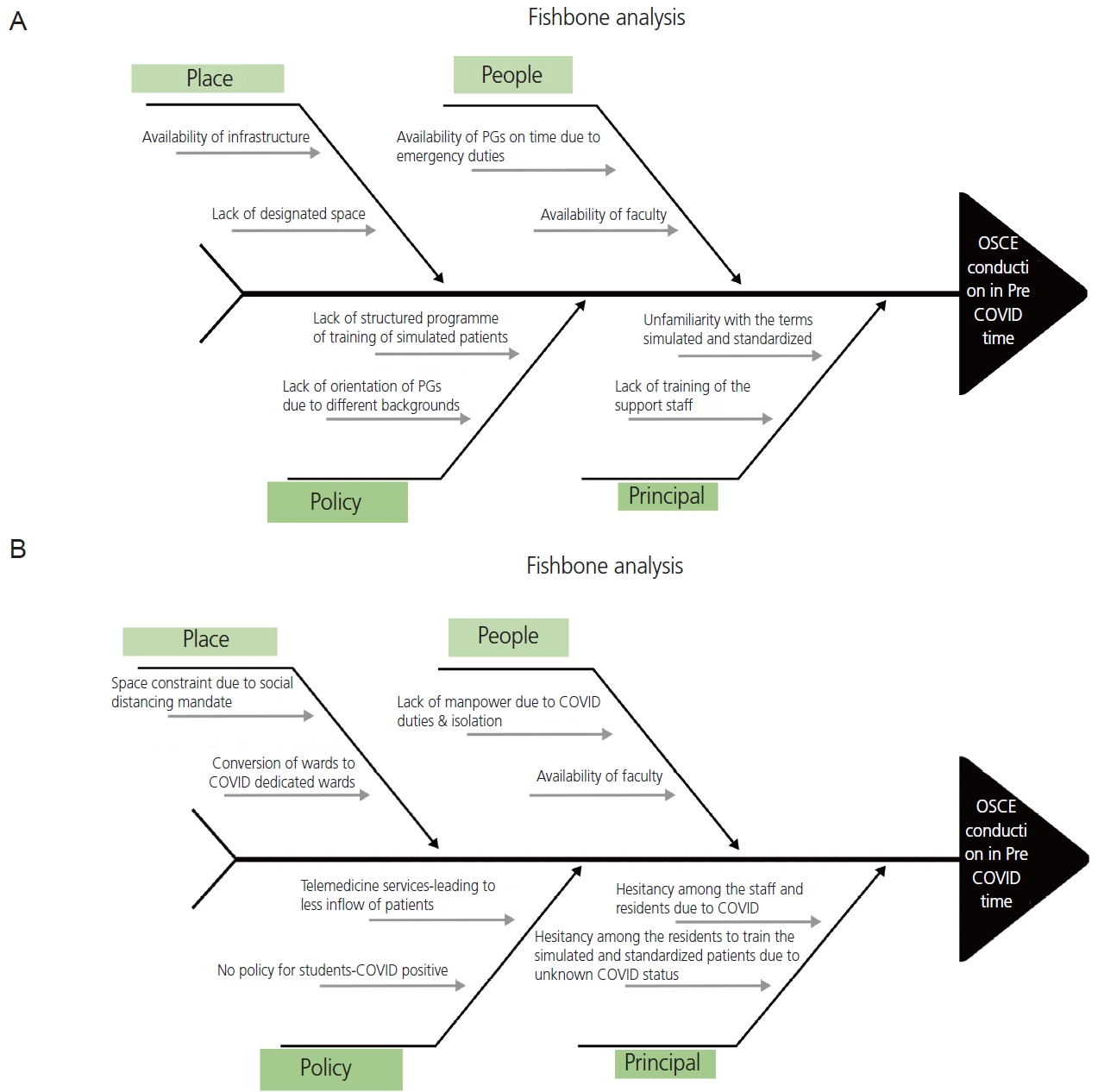

The medical curriculum comprises formative and summative assessments structured as OSCE, multiple-choice questions, theory exams, and practical viva voce. Organizing an OSCE for 100 students is a herculean task, and the students’ assessment by faculty at all stations was neither practical nor feasible. Hence, this study was designed to train postgraduate residents simultaneously with assessors (OSCE assessors) and collect their responses and feedback regarding lessons learned while conducting the OSCE and qualitative improvements that could be made in the ongoing OSCE curriculum. Because these postgraduate students assessed the undergraduate students, they were assigned the role of near peers. A baseline assessment of problems and challenges involving PGs was performed using the fishbone method during the pre-COVID and COVID periods (Fig. 1).

Subsequently, a PDSA cycle was implemented. From the first PDSA cycle, we realized that it was necessary to form a team and plan in advance. A team comprising two faculty members and two others, one senior resident, one 3rd-year resident, one senior nursing officer in the ward, and one ward boy, was subsequently created. The team meeting was conducted one week before the OSCE.

The first obstacle was the lack of sensitization of the PGs to the OSCE. As many students were unaware of the rules and regulations of the OSCE, the first task was to sensitize the PG students posted in the department to the OSCE. PGs were trained to arrange the OSCE stations for UG exams. One day prior, PGs would ensure that the standardized and non-standardized patients wore proper equipment on the day of examination, give them a short and informative description of what will happen at the station, and preferably, a detailed history to give the students.

The PGs were trained to guide the standardized patients in responding consistently and realistically. They were further guided to train the nursing staff to act as simulated patients in case of the unavailability of standardized patients and sometimes to act as assessors at select stations.

This problem was addressed by creating, a day before the exam, a list of OSCE stations, the number of rest stations, residents’ names, and the items and accessories required for each station.

After the first PDSA cycle, we realized that the second most important obstacle was the interpretation of the checklist and student assessment. The PGs were further trained to interpret the checklist, evaluate UG examinees by observing their performance at the station, and rehearse the procedures at the OSCE stations in a stepwise manner, both before and after the exam. The briefing session was conducted before the actual OSCE assessment. Additionally, the process of critical appraisal was also practiced during the formative assessment or ward-leaving exams, when randomly selected UG students were assessed by the PG resident and the faculty to check for consistency in marking.

The OSCE assessment was conducted for 100 medical students in their final professional exams during a study period of 3 years. One senior resident and two faculty members acted as facilitators. Each exam was distributed over 3 days, with 34 students each day. Students were further divided into two groups: 15 active stations and four or five rest stations. The 15 stations for communication skills (history taking and counseling), physical examination, and practical procedures assessed three domains of learning (cognitive, psychomotor, and affective). Each station had a duration of 2 minutes. At each station, PG residents rated the candidates’ performance on checklists. One of the rotation residents was given the timekeeping task (Fig. 2).

At the end of the OSCE, the residents were interviewed about their experiences with the OSCE and lessons learned through a semi-structured online questionnaire. A five-point Likert scale was used to assess responses to the questions (strongly disagree, disagree, neutral, agree, and strongly agree). Anonymity was maintained, as students were not required to enter their name, email identifier, or any other identifiers. At the same time, the facilitators were also asked about the challenges faced.

The team members were motivated to participate in each meeting. Eight PDSA cycles were performed.

During the COVID pandemic, additional challenges arose, which were analyzed in the 5th OSCE (Fig. 1B) and addressed in subsequent PDSA cycles. The major challenge was “space constraint” and maintaining social distance. To overcome this issue, the OSCE was conducted in the outpatient department (OPD) premises, as most cases were then handled by telemedicine and physical footfall was low. Arrangements were made in accordance with social distancing and self-protection guidelines. All OPD rooms and corridors were used (Fig. 3). We increased the time between stations and decreased the number of stations. The second challenge was students’ and assessors’ exposure to patients. To overcome this, hand sanitizers were made available at all stations, and all students, assessors, and patients were provided with surgical masks and face shields. Care was taken to include only real-time polymerase chain reaction-negative patients or, in their absence, simulated patients acted out by OPD attendants only.

Continuous implementation was performed in the next two PDSA cycles.

A layperson with or without his or her own medical problems (history and physical examination findings), or a real patient who has been trained to depict a specific medical case or to play the role of a patient. In this case, real problems or those of other patients can be considered as learning content [3,8].

Professional abilities that include knowledge, skills, attitudes, and experience [9].

Defined as a student’s ability to satisfactorily deliver what is expected at a certain point in time in a standardized setting (examination setting).

Baseline assessment at the five stations revealed that most PGs were initially unaware of the terminologies used in the OSCE. They scored well on facts, but their overall assessment score, including communication skills, time management, and patient interaction, was only 30% (average score of 8 out of 25 from five stations).

Our residents were now sensitized to the OSCE and were familiar with the terms simulated and standardized patients. Their communication skills improved. Their knowledge of OSCE and their skills increased to 60%, as assessed by a random check at 5 stations after the OSCE.

Residents were trained to interpret the checklist and consistently assess the students. They were able to train their juniors on the same tasks. Their knowledge of the concepts was further strengthened, and their knowledge and overall scores increased to 70% (average score of 18 out of 25 from five stations).
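The percentages quoted for the PDSA cycles follow directly from the averaged station scores. As a minimal illustrative sketch (the function name `score_percent` is hypothetical and not part of the study), the reported averages of 8 and 18 out of 25 convert to 32% and 72%, which the text rounds to approximately 30% and 70%:

```python
def score_percent(score: float, maximum: float) -> float:
    """Convert a raw score to a percentage of the maximum achievable score."""
    if maximum <= 0:
        raise ValueError("maximum must be positive")
    return 100.0 * score / maximum


# Average scores out of 25 across five stations, as reported in the text.
baseline = score_percent(8, 25)   # before the first PDSA cycle
final = score_percent(18, 25)     # after training on checklist interpretation

print(f"baseline: {baseline:.0f}%, final: {final:.0f}%")
```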

During COVID, the OSCE was conducted with a different outlook after reanalysis of new challenges, and the residents participated with much greater enthusiasm.

A total of 38 OSCE sessions were conducted during the study period. Of these, eight were summative OSCEs for outgoing batches; the remaining sessions, which were formative assessments for 5th/6th-semester students, were excluded. Overall, 22 PG residents were successively trained (the number increased every 6 months) to conduct the OSCE. Formal feedback was obtained from participants at the end of the OSCE. Table 1 shows the PG students’ responses to the OSCE. Most students (95%) believed that this examination system was fair and covered a wide range of clinical skills and knowledge. However, a few (4.5%) believed that it was more labor- and resource-intensive, time-consuming, and affected the functioning of the department by hampering routine departmental activities.

Most participants found the task enjoyable, informative, and a welcome change from routine. The involvement of nursing officers at stations, such as during handwashing, drug preparation, and biomedical waste management, added a much-needed human touch to the otherwise disciplined atmosphere of assessment. Rest stations and timekeeping are crucial. Overall, 100% of the residents were happy with learning common practical methods through repeated revision and practice. Their receptiveness to the exercise could be gauged by the fact that they wanted to take OSCEs for PG examinations (Table 2). Moreover, their role modification from students to assessors encouraged even the most junior residents to actively participate in such activities, which helped them overcome their hesitations and improve their communication skills.

Eighteen (81.8%) residents reported that they had improved in all three domains (communication skills, time management skills, and a stepwise approach to clinical scenarios), while four (18.2%) believed that the stepwise approach was the major aspect that they learned.

The overall experience of the residents was good for two (9.1%), very good for 15 (68.2%), and excellent for five (22.7%) residents.
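The proportions above can be checked against the 22 respondents. The following is a small sketch for that check only; the helper `percent_breakdown` and the dictionary labels are illustrative names, not taken from the study's questionnaire.

```python
N_RESIDENTS = 22  # number of PG residents who gave feedback


def percent_breakdown(counts: dict[str, int], total: int) -> dict[str, float]:
    """Return each category's share of `total` as a percentage (1 decimal)."""
    if sum(counts.values()) != total:
        raise ValueError("category counts must add up to the total")
    return {label: round(100.0 * n / total, 1) for label, n in counts.items()}


# Counts as reported in the text.
domains_learned = {"all three domains": 18, "stepwise approach mainly": 4}
overall_experience = {"good": 2, "very good": 15, "excellent": 5}

print(percent_breakdown(domains_learned, N_RESIDENTS))
print(percent_breakdown(overall_experience, N_RESIDENTS))
```

The check that counts add up to the total guards against a category being dropped when responses are transcribed from the feedback form.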

Additionally, it was encouraging to see our residents performing the same tasks (must-do skills) in regular clinical postings in a methodical and stepwise manner, as assessed in their work-based assessment during clinical rounds and end-semester exams.

The following are a few remarks made by the residents after being asked an open-ended question regarding suggestions: “it’s the first time that I had attended OSCE; the questions were very good & practical, I completely enjoyed assessing my juniors”; “there should be an end semester OSCE for postgraduates as well on the basis of topics covered in class”; “managing OPD services by allocating separate junior and senior residents can be an option”; and “OSCE is required more frequently”.

The OSCE comprises a series of exercises, generally of equal length, 5-10 minutes or even less, depending upon the case scenarios that test various competencies at multiple stations. These stations can be manned or unmanned. Importantly, the measurements are structured using specially prepared standardized checklists with specific instructions and training provided to the examiners. This test measures the affective, cognitive, and psychomotor domains of learning from basic to advanced levels [10]. A time allowance is generally given for candidates to move between stations, and rest stations are often interspersed to allow candidates to prepare themselves before coming to stations and to complete any incomplete responses [3]. It also gives the assessed person a break for introspection or for revising concepts. The candidates’ answers from the unmanned stations are collected on separate exam sheets, while the examiners record their marks on separate sheets.

In our study, most stations were manned, except for a few, such as drawing a partograph on a given clinical scenario or interpreting the pelvic organ prolapse quantification grid. However, the stations covered all three learning domains and included five stations for communication skills, five for practical procedures, and five for physical examination.

Three types of patients are commonly used in the OSCE: non-standardized, simulated, and standardized. Non-standardized patients were given clear instructions regarding their behaviors and interactions with the examinees in our study. Similarly, the simulated patients were carefully instructed about the condition they would present. Paper-based instructions are typically insufficient if a standardized patient must mimic a medical condition. Hence, it requires time and training. We observed that this task was performed well by the PGs in our study.

Cervical cancer is the 4th most common cancer in women worldwide and can be prevented through appropriate screening [11]. Similarly, diabetes and antiepileptic drug use during pregnancy are common causes of congenital abnormalities and adverse fetal outcomes [12]. The rate of cesarean sections is increasing; therefore, counseling for vaginal births after cesarean sections is paramount [13]. Communication skill stations were used to create awareness among patients’ relatives about such common areas of concern by utilizing them as simulated patients. One of the patients’ relatives (a schoolteacher) who simulated a patient requested to participate in the OSCE for all 3 days of examination and said that she liked this process and would incorporate it in her school to improve the interaction of students with their peers, juniors, and elders.

The coordinator of an OSCE is responsible for overseeing the development, organization, administration, and grading of the examination. He or she is responsible for directing the rotation flow and for identifying and solving logistic issues, including unforeseen shortages of necessary materials.

All manned stations must have trained assessors, who are typically faculty members. However, because of the limited workforce and simultaneous functioning of the department, all stations cannot be overseen by faculty members. Hence, we involved PG residents by training them as assessors and helping them conduct the OSCE. In a study by Schwill et al. [4], undergraduate medical students in 3rd or 4th years of their curriculum acted as peer assessors for first-year juniors. Other studies have documented the importance of peer assessors [14].

It is important to note that untrained assessors and those with limited involvement in exam construction or question modulation award higher marks than trained assessors [15-17]. This may be attributed to a lack of understanding of the rating criteria and poor appreciation of the exact purpose, format, and assessment scoring [16,18]. Therefore, to maintain consistency in marking, proper training was provided to PGs. Additionally, a pre-OSCE check of assessor accuracy and consistency was routinely performed by having experienced and novice assessors assess a few randomly selected students during ward-leaving exams, so that any discrepancy beyond the acceptable limit could be addressed.

The selection and motivation of the support staff are equally important to ensure a successful OSCE. This can be done by giving charge to one responsible senior person, either a senior resident or PG, experienced in coordinating logistics. He or she can guide the support staff to resolve physical issues, such as the arrangement of tables and chairs at each station, setting up individual stations, photocopying, preparing and distributing materials, attending to the needs of examinees, examiners, and simulated patients, developing the OSCE map, setting up the bell system, developing and placing the number and arrow signage at appropriate places, arrangement of required material and equipment, and making arrangements for examinees waiting for their exam.

In addition, the support staff can help collect answer sheets from every station and examiner and cater to all personnel involved during the exam day. In our study, PGs, besides taking the responsibility of assessment, also took over the function of guiding and instructing the support staff and training them to organize the OSCE smoothly. This led to a sense of leadership in the enthusiastically working PGs, as they had the opportunity to revise and rehearse the stations.

The role of the timekeeper is to maintain the OSCE schedule using a bell that rings at precise intervals based on a stop clock. The timekeeper should remain focused and not be distracted. This task is far more important than the recognition usually assigned to it. In our study, we maintained 15 stations lasting 2-3 minutes each, which was much shorter than in the study by Majumder et al. [2]. Moreover, the break time between stations was 2 minutes in the aforementioned study, as compared to 30 seconds in our study. In the original version described by Harden [1], the students moved around 18 active stations and two rest stations in a hospital ward. Each station was 4.5 minutes long, with a 30-second break between stations.
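The timing figures quoted here can be turned into an approximate circuit length per candidate. The sketch below is a simplification under stated assumptions: every station, active or rest, is taken to last the same time and to be followed by one changeover, and the rest-station count for the present study (four or five) is taken from the description of the exam layout. The function `circuit_minutes` is a hypothetical helper, not part of either study's protocol.

```python
def circuit_minutes(active: int, rest: int,
                    station_min: float, changeover_min: float) -> float:
    """Approximate time for one candidate to pass through every station once.

    Assumes each station (active or rest) lasts `station_min` minutes and is
    followed by a changeover of `changeover_min` minutes.
    """
    if active < 0 or rest < 0:
        raise ValueError("station counts must be non-negative")
    return (active + rest) * (station_min + changeover_min)


# Harden's original format: 18 active + 2 rest stations, 4.5 min + 30 s break.
harden = circuit_minutes(18, 2, 4.5, 0.5)

# Present study: 15 active stations, 4 or 5 rest stations,
# 2-3 minutes per station, 30 s break (shortest and longest combinations).
shortest = circuit_minutes(15, 4, 2.0, 0.5)
longest = circuit_minutes(15, 5, 3.0, 0.5)

print(f"Harden: {harden} min; present study: {shortest}-{longest} min")
```

Under these assumptions, Harden's format works out to 100 minutes per circuit, while the format described in this study falls between 47.5 and 70 minutes.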

The feedback and suggestions provided by the young assessors were utilized to improve the OSCE stations and management plans. The OSCE ran smoother and better than the previous one with each new round. Further, our study indicates that the residents perceived the OSCE as an excellent learning tool for skill and attitude acquisition. This finding is supported by other studies that have documented OSCE as a teaching tool [19-22]. Students reported that they appreciated the opportunity to engage in constructive discussions of their strengths and weaknesses in clinical encounters, observe a variety of doctor-patient interaction styles, and practice future OSCE-type examinations.

With the declaration of the COVID pandemic in March 2020 by the World Health Organization, all students were sent home, but as the understanding of the disease gradually increased and the situation came under control, the students were called back [23]. Online classes have continued until now, and the primary challenge was how to conduct practical exams. As far as the OSCE is concerned, all challenges were handled well, and the exam was conducted as smoothly as before.

Blythe et al. [24] also attempted a virtual OSCE during the lockdown period; however, in their study, only nine subjects were assessed. Our study is unique in that it assessed the importance of PGs and their learning by conducting an OSCE, which, to the best of our knowledge, has not been extensively studied, particularly in the context of the COVID pandemic.

Overall, the residents had a positive attitude toward the OSCE. They considered it a learning tool and not a burden imposed by conducting UG examinations. After conducting each OSCE, residents were able to perform ‘must-do skills’ confidently in their work-based assessment. The involvement of residents in the OSCE also improved their patient interaction and communication skills. Hence, we suggest that the OSCE should be considered an important learning tool to assess competencies, and the involvement of near-peer assessors (PG students) in the OSCE can overcome human resource limitations. Furthermore, implementation of PDSA cycles must be a continuous process.

Notes

Author contributions

CS, PS, AB and SS designed the concept. CS, MJ, VM collected the clinical data and wrote the manuscript. AB, MG, GY, NKG, VM and MJ did literature review and edited the manuscript. PS and SS revised and approved the manuscript. All the authors read and approved the final manuscript.

Acknowledgments

We acknowledge Dr. Kuldeep Singh, Dean Academics, AIIMS Jodhpur, for his guidance and mentorship in the field of medical education.

Fig. 1.

(A) Fishbone analysis in the pre-COVID-19 period. (B) Fishbone analysis during the COVID-19 pandemic. PG, postgraduate; OSCE, objective structured clinical examination; COVID-19, coronavirus disease-2019.

Fig. 2.

Conducting the OSCE in the pre-COVID-19 era. OSCE, objective structured clinical examination; COVID-19, coronavirus disease-2019.

Fig. 3.

(A) Conducting the OSCE during the COVID-19 pandemic; (B) hospital staff trained as a simulated patient during the COVID-19 pandemic. OSCE, objective structured clinical examination; COVID-19, coronavirus disease-2019.

Table 1.

Responses of postgraduate students about OSCE

Table 2.

Responses of postgraduate students about their learning experience from OSCE

References

1. Harden RM, Stevenson M, Downie WW, Wilson GM. Assessment of clinical competence using objective structured examination. Br Med J 1975;1:447-51.

2. Majumder MAA, Kumar A, Krishnamurthy K, Ojeh N, Adams OP, Sa B. An evaluative study of objective structured clinical examination (OSCE): students and examiners perspectives. Adv Med Educ Pract 2019;10:387-97.

3. Bhuiyan P, Supe A, Rege N. The art of teaching medical students-e-book 3rd ed. New Delhi: Elsevier Health Sciences; 2015.

4. Schwill S, Fahrbach-Veeser J, Moeltner A, Eicher C, Kurczyk S, Pfisterer D, et al. Peers as OSCE assessors for junior medical students - a review of routine use: a mixed methods study. BMC Med Educ 2020;20:17.

5. Khan FA. Objective structured clinical examinations (OSCEs) as assessment tools for medical student examination. Telangana J Psychiatry 2016;2:17-21.

6. Alkhateeb NE, Al-Dabbagh A, Ibrahim M, Al-Tawil NG. Effect of a formative objective structured clinical examination on the clinical performance of undergraduate medical students in a summative examination: a randomized controlled trial. Indian Pediatr 2019;56:745-8.

7. Gilson GJ, George KE, Qualls CM, Sarto GE, Obenshain SS, Boulet J. Assessing clinical competence of medical students in women’s health care: use of the objective structured clinical examination. Obstet Gynecol 1998;92:1038-43.

8. Beigzadeh A, Bahmanbijri B, Sharifpoor E, Rahimi M. Standardized patients versus simulated patients in medical education: are they the same or different. J Emerg Pract Trauma 2016;2:25-8.

9. Ware J, Mardi AE, Abdulghani H, Siddiqui I. Objective structured clinical examination (OSCE manual 2014) [Internet]. Riyadh: Saudi Commission for Health Specialities; c2014 [cited 2023 Apr 27]. Available from: https://pdf4pro.com/amp/view/objective-structured-clinicalexamination-scfhs-52868d.html.

10. Khan KZ, Ramachandran S, Gaunt K, Pushkar P. The objective structured clinical examination (OSCE): AMEE guide no. 81. Part I: an historical and theoretical perspective. Med Teach 2013;35:1437-46.

11. Ghalavandi S, Heidarnia A, Zarei F, Beiranvand R. Knowledge, attitude, practice, and self-efficacy of women regarding cervical cancer screening. Obstet Gynecol Sci 2021;64:216-25.

12. Lee KH, Han YJ, Chung JH, Kim MY, Ryu HM, Kim JH, et al. Treatment of gestational diabetes diagnosed by the IADPSG criteria decreases excessive fetal growth. Obstet Gynecol Sci 2020;63:19-26.

13. Kim HY, Lee D, Kim J, Noh E, Ahn KH, Hong SC, et al. Secular trends in cesarean sections and risk factors in South Korea (2006-2015). Obstet Gynecol Sci 2020;63:440-7.

14. Kim KJ, Kim G. The efficacy of peer assessment in objective structured clinical examinations for formative feedback: a preliminary study. Korean J Med Educ 2020;32:59-65.

15. Hope D, Cameron H. Examiners are most lenient at the start of a two-day OSCE. Med Teach 2015;37:81-5.

16. Stroud L, Herold J, Tomlinson G, Cavalcanti RB. Who you know or what you know? Effect of examiner familiarity with residents on OSCE scores. Acad Med 2011;86:S8-11.

17. Pell G, Homer MS, Roberts TE. Assessor training: its effects on criterion‐based assessment in a medical context. Int J Res Method Educ 2008;31:143-54.

18. Iramaneerat C, Yudkowsky R. Rater errors in a clinical skills assessment of medical students. Eval Health Prof 2007;30:266-83.

19. Saroja C, Sathyasree C, Santa Kumari A, Padmini O. Student perception of OSCE as a learning tool in osmania medical. Applied Physiology and Anatomy Digest 2018;3:24-8.

20. Müller S, Koch I, Settmacher U, Dahmen U. How the introduction of OSCEs has affected the time students spend studying: results of a nationwide study. BMC Med Educ 2019;19:146.

21. Brazeau C, Boyd L, Crosson J. Changing an existing OSCE to a teaching tool: the making of a teaching OSCE. Acad Med 2002;77:932.

22. Chong L, Taylor S, Haywood M, Adelstein BA, Shulruf B. The sights and insights of examiners in objective structured clinical examinations. J Educ Eval Health Prof 2017;14:34.

23. World Health Organization (WHO). Coronavirus disease (COVID-19) pandemic [Internet]. Geneva: WHO; c2023 [cited 2023 Apr 26]. Available from: https://www.who.int/europe/emergencies/situations/covid-19.
